NVIDIA NIM Integration

Launch optimized AI inference with NVIDIA NIM microservices. AI Controller automates deployment, scaling, and health monitoring so teams can focus on delivering better customer experiences.

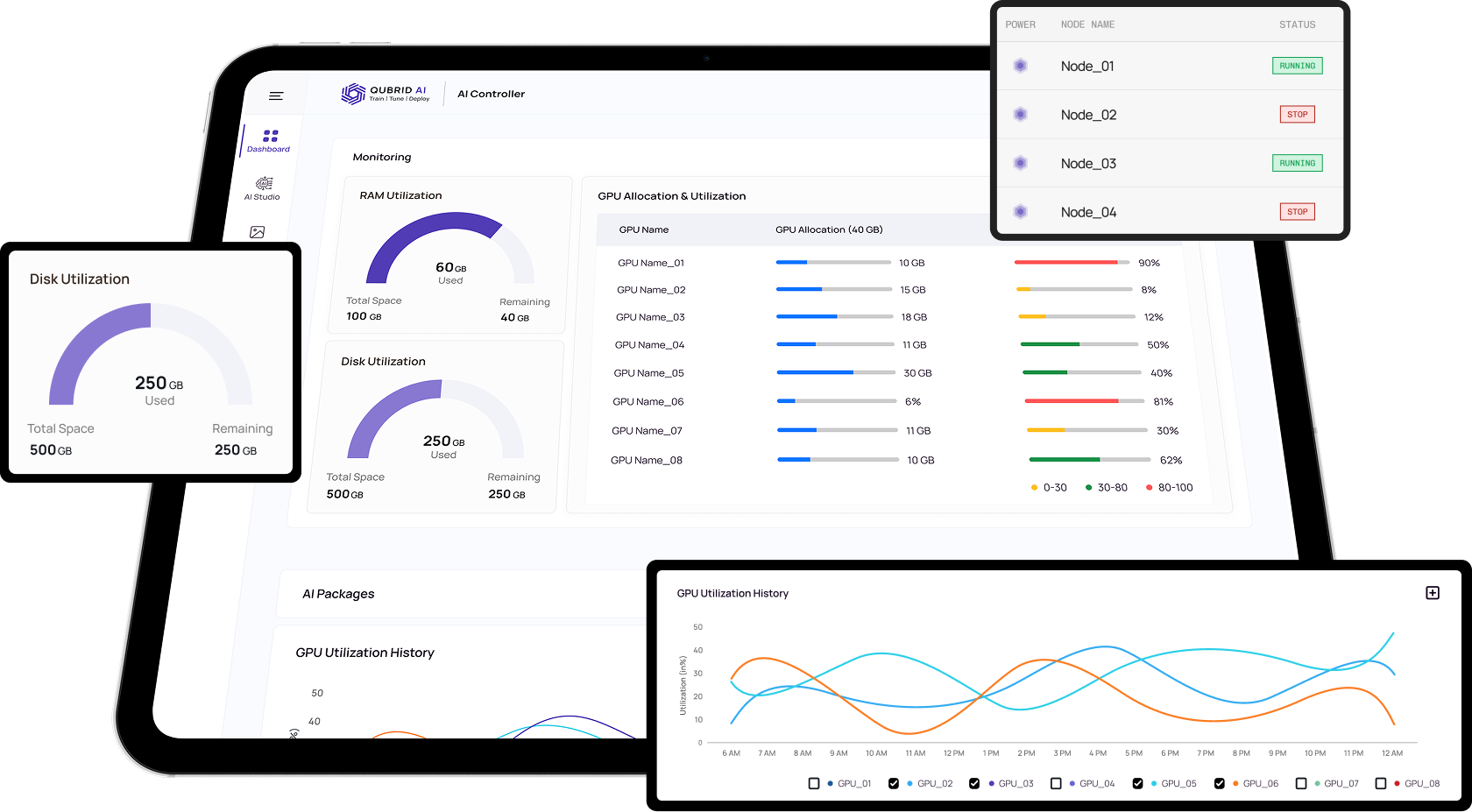

Deploy, monitor, and build knowledge bases and custom LLM infrastructure at any scale.

Empower infrastructure and AI teams with a management layer designed for GPU-aware orchestration, governance, and visibility.

Keep runtime environments consistent across teams with automated dependency resolution, version pinning, and proactive updates for your deep learning toolchain.

Configure vector search and build Retrieval-Augmented Generation workflows to deliver responses grounded in your own data.
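To make the retrieval step concrete, here is a minimal sketch of how a RAG workflow grounds a prompt in your own data. It is an illustration only, not AI Controller's implementation: the function names (`embed`, `retrieve`, `build_prompt`), the sample documents, and the toy bag-of-words "embedding" are all assumptions chosen to keep the example self-contained. A production setup would typically use an embedding model and a vector database instead.

```python
import math
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding model: maps text to token counts."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(query, documents, k=1):
    """Assemble a prompt that asks the model to answer from retrieved context."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

# Hypothetical knowledge-base entries, for illustration only.
DOCS = [
    "GPU drivers are updated during the weekly maintenance window.",
    "Knowledge bases are rebuilt when new documents are uploaded.",
    "Model allocation is managed per team by administrators.",
]

if __name__ == "__main__":
    print(build_prompt("when are gpu drivers updated", DOCS))
```

The resulting prompt would then be sent to whichever LLM endpoint you have deployed; that call is omitted here because it depends on your environment.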

Fine-tune foundation models using domain-specific datasets to improve accuracy and adapt AI to your unique business use cases.

An enterprise software solution for your on-premises servers. Controller software gives IT administrators a single pane of glass into their GPU infrastructure, with the ability to allocate GPU resources and AI models to enterprise users, monitor usage, update drivers, and more.