Claude Opus 4.7 vs 4.6: What Actually Changed?

Anthropic released Claude Opus 4.7 on April 16, 2026, just 70 days after Opus 4.6 shipped on February 5. Both models carry the same $5/$25 per million token pricing. Both are positioned as the company's most capable generally available model for complex reasoning and agentic coding. So what actually changed, and does it matter for your production workloads?

This is a technical breakdown of the differences between Opus 4.6 and Opus 4.7, with benchmark data, use case recommendations, and migration guidance for teams running either model in production.

👉 Try both models on Qubrid AI: https://platform.qubrid.com/models

Release Context: Why Two Opus Models in 70 Days?

Opus 4.6 launched on February 5, 2026, as a major upgrade to Opus 4.5. It introduced the 1M token context window for Opus-class models, adaptive thinking, effort controls, and state-of-the-art performance on agentic coding benchmarks like Terminal-Bench 2.0. The model was positioned for sustained, long-running work, particularly in coding, finance, and legal workflows.

Opus 4.7 arrived 70 days later, on April 16, 2026. Anthropic positioned it as a "notable improvement on Opus 4.6 in advanced software engineering, with particular gains on the most difficult tasks." But the release also came with a specific context: Opus 4.7 is the first model in Anthropic's Project Glasswing framework, designed to test new cybersecurity safeguards before deploying Mythos-class capabilities more broadly.

The timing matters. Between the two releases, users reported that Opus 4.6 had "quietly gotten worse," with complaints centered on regressions in complex engineering tasks. Anthropic denied deliberately scaling back the model to redirect compute to other projects, but the perception created pressure for a clear upgrade.

High-Level Positioning

Opus 4.6 was marketed as:

State-of-the-art on agentic coding (Terminal-Bench 2.0)

Best-in-class on economically valuable knowledge work (GDPval-AA)

First Opus model with 1M token context window

Stronger long-context retrieval and reasoning than Opus 4.5

👉 Try Claude Opus 4.6 on the Qubrid AI platform: https://platform.qubrid.com/playground?model=anthropic-claude-opus-4-6

Opus 4.7 is marketed as:

The most capable Opus for the hardest coding work

Substantially better multimodal vision (3.75MP vs 1.15MP)

More precise instruction following

Better self-verification before reporting results

👉 Try Claude Opus 4.7 on the Qubrid AI platform: https://platform.qubrid.com/playground?model=anthropic-claude-opus-4-7

Both models target autonomous, long-running agentic tasks. The difference is in execution consistency, visual fidelity, and instruction adherence on the hardest 20% of tasks.

Core Technical Differences

1. Multimodal Vision: 3× Resolution Increase

Opus 4.6:

Maximum image resolution: 1,568 pixels / 1.15 megapixels

Standard vision capabilities for document understanding, screenshots, diagrams

Opus 4.7:

Maximum image resolution: 2,576 pixels / 3.75 megapixels

3.26× increase in total pixel capacity

Model-level change (not an API parameter): all images are processed at higher fidelity automatically

What this unlocks:

XBOW, which builds autonomous penetration testing tools, reported a jump from 54.5% to 98.5% on its visual acuity benchmark when moving from Opus 4.6 to Opus 4.7. This is not a small improvement; it's the difference between a model that can't reliably read dense UI screenshots and one that can.

For computer-use agents, life sciences workflows (reading chemical structures, patent diagrams), and any task requiring pixel-level precision, this is the single biggest differentiator between the two models.

2. Tokenizer Update: 1.0–1.35× Token Increase

Opus 4.7 uses a new tokenizer that produces 1.0–1.35× more tokens for the same input compared to Opus 4.6 (up to ~35% more, varying by content type). This is a tradeoff for improved text processing quality.

Migration impact:

If you're running Opus 4.6 in production and migrating to Opus 4.7, you'll need to:

Update max_tokens parameters to give additional headroom

Adjust token budgets and compaction triggers

Monitor token usage on real traffic to measure the actual increase

Anthropic's own testing showed that token efficiency improves when measured as performance per token across all effort levels, meaning the additional tokens buy real capability gains, not just overhead.
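The headroom adjustment in the migration steps above can be sketched as a small helper. This is a minimal illustration, not an official formula: the function name `scaled_budget` and the optional `ceiling` argument are hypothetical, and the 1.35 multiplier is simply the upper bound of the range quoted above, so real traffic may need less.

```python
import math
from typing import Optional

# Upper bound of the 1.0-1.35x tokenizer range quoted in this article.
TOKENIZER_MAX_RATIO = 1.35


def scaled_budget(old_budget: int,
                  ratio: float = TOKENIZER_MAX_RATIO,
                  ceiling: Optional[int] = None) -> int:
    """Scale a token budget tuned for Opus 4.6 to leave headroom on Opus 4.7.

    `ceiling` is a hypothetical hard cap, for example the model's maximum
    output size, that the scaled value must not exceed.
    """
    if old_budget <= 0:
        raise ValueError("old_budget must be positive")
    new_budget = math.ceil(old_budget * ratio)
    if ceiling is not None:
        new_budget = min(new_budget, ceiling)
    return new_budget


# A 4,096-token budget with worst-case headroom.
print(scaled_budget(4096))
# A large budget clamped to a 128k cap.
print(scaled_budget(100_000, ceiling=128_000))
```

Starting from the worst-case ratio and then tightening it once real usage is measured avoids truncated responses during the first days of a migration.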

3. Instruction Following: Literal vs Loose Interpretation

Opus 4.6:

Interpreted instructions loosely in some cases

Would skip parts of complex multi-step requests

Required more scaffolding and validation prompts

Opus 4.7:

Takes instructions literally and completely

Executes all parts of multi-step requests precisely

May produce unexpected results on prompts written for Opus 4.6

Critical migration note: Prompts optimized for Opus 4.6 may need re-tuning for Opus 4.7. Where earlier models glossed over instructions, Opus 4.7 will execute them exactly. Teams should review and adjust prompts during migration.

4. New Effort Level: xhigh

Opus 4.6 effort levels:

low → medium → high (default) → max

Opus 4.7 effort levels:

low → medium → high → xhigh → max

The new xhigh level sits between high and max, giving finer control over the reasoning depth vs latency tradeoff. In Claude Code, the default effort level was raised to xhigh for all plans.

For coding and agentic use cases, Anthropic recommends starting with high or xhigh on Opus 4.7.
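If one codebase has to talk to both models, the two effort ladders above can be encoded as data so that an unsupported level is caught before the request is sent. The sketch below is illustrative only: `resolve_effort` is a hypothetical helper, and falling back to the nearest lower level is an assumed policy rather than documented API behavior. The model identifiers are the ones used in the Python example later in this article.

```python
# Effort ladders as listed above, ordered from least to most reasoning depth.
EFFORT_LEVELS = {
    "claude-opus-4-6": ["low", "medium", "high", "max"],
    "claude-opus-4-7": ["low", "medium", "high", "xhigh", "max"],
}

# The longest ladder serves as the reference ordering for fallbacks.
FULL_LADDER = EFFORT_LEVELS["claude-opus-4-7"]


def resolve_effort(model: str, requested: str) -> str:
    """Return `requested` if the model supports it, else the nearest lower level.

    The fallback policy is an assumption made for this sketch.
    """
    if model not in EFFORT_LEVELS:
        raise ValueError(f"unknown model: {model}")
    if requested not in FULL_LADDER:
        raise ValueError(f"unknown effort level: {requested}")
    supported = EFFORT_LEVELS[model]
    if requested in supported:
        return requested
    # Walk down the reference ladder until a supported level is found.
    index = FULL_LADDER.index(requested)
    for level in reversed(FULL_LADDER[:index]):
        if level in supported:
            return level
    raise ValueError(f"no fallback for {requested} on {model}")


print(resolve_effort("claude-opus-4-7", "xhigh"))  # xhigh
print(resolve_effort("claude-opus-4-6", "xhigh"))  # high
```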

5. Adaptive Thinking: Behavioral Change

Opus 4.6:

Adaptive thinking with configurable budget tokens:

thinking = {"type": "enabled", "budget_tokens": 32000}

Opus 4.7:

Adaptive thinking is the only supported thinking mode

Syntax changed:

thinking = {"type": "adaptive"} with output_config = {"effort": "high"}

Thinking is off by default and must be explicitly enabled

Thinking content omitted from response by default unless the caller opts in

This is a breaking change for teams using extended thinking on Opus 4.6.
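One way to handle the change is to rewrite request parameters in a single place instead of editing every call site. The following is a minimal sketch: `migrate_thinking` is a hypothetical helper, the field names `thinking`, `budget_tokens`, `output_config`, and `effort` are taken from the snippets above, and the default effort of "high" is an assumption you should tune.

```python
def migrate_thinking(params: dict, effort: str = "high") -> dict:
    """Return a copy of `params` with 4.6-style thinking converted to 4.7 style.

    Requests that never enabled thinking are returned without a `thinking`
    key, since thinking is described above as off by default on Opus 4.7.
    """
    migrated = {k: v for k, v in params.items() if k != "thinking"}
    old_thinking = params.get("thinking")
    if not old_thinking:
        return migrated
    if old_thinking.get("type") == "enabled":
        # The token budget is dropped; effort now controls reasoning depth.
        migrated["thinking"] = {"type": "adaptive"}
        output_config = dict(params.get("output_config", {}))
        output_config.setdefault("effort", effort)
        migrated["output_config"] = output_config
    else:
        # Already adaptive (or another type): pass through unchanged.
        migrated["thinking"] = dict(old_thinking)
    return migrated


old_request = {
    "model": "claude-opus-4-6",
    "max_tokens": 4096,
    "thinking": {"type": "enabled", "budget_tokens": 32000},
}
print(migrate_thinking(old_request))
```

The helper copies rather than mutates its input, so the original Opus 4.6 parameters stay available for side-by-side comparisons.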

6. Temperature, top_p, top_k: Now Unsupported

Starting with Opus 4.7, setting temperature, top_p, or top_k to any non-default value returns a 400 error. The recommended migration path is to omit these parameters entirely and use prompting to guide behavior instead.

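If you relied on temperature = 0 for determinism, note that it never guaranteed identical outputs. On Opus 4.7 the setting is no longer accepted, so determinism-sensitive workflows need to be handled through prompting and output validation instead.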
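A defensive way to apply this is to strip the sampling parameters before a request is built, and to log what was removed so the affected call sites can be reviewed. This is a small sketch; `strip_sampling_params` is a hypothetical helper, not part of any SDK.

```python
# Parameters described above as rejected on Opus 4.7 when set to non-default values.
SAMPLING_PARAMS = ("temperature", "top_p", "top_k")


def strip_sampling_params(params: dict) -> tuple:
    """Return (cleaned_params, removed) without mutating the input dict."""
    removed = {k: params[k] for k in SAMPLING_PARAMS if k in params}
    cleaned = {k: v for k, v in params.items() if k not in SAMPLING_PARAMS}
    return cleaned, removed


request = {"model": "claude-opus-4-7", "max_tokens": 1024, "temperature": 0, "top_p": 0.9}
cleaned, removed = strip_sampling_params(request)
print(cleaned)  # {'model': 'claude-opus-4-7', 'max_tokens': 1024}
print(removed)  # {'temperature': 0, 'top_p': 0.9}
```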

7. Cybersecurity Safeguards

Opus 4.6:

No specific cybersecurity safeguards

Standard safety profile

Opus 4.7:

First model with real-time cybersecurity safeguards

Requests involving prohibited or high-risk cybersecurity topics trigger automatic refusals

Cyber capabilities were intentionally reduced compared to Mythos Preview

Legitimate security work requires Cyber Verification Program approval

This is part of Anthropic's Project Glasswing framework. The safeguards are designed to block malicious use while allowing verified security professionals to use the model for defensive work.

Benchmark Comparison

Coding and Software Engineering

| Benchmark | Opus 4.6 | Opus 4.7 | Source |
|---|---|---|---|
| CursorBench | 58% | 70% | Cursor |
| Rakuten-SWE-Bench | Baseline | 3× more tasks resolved | Rakuten |
| Terminal-Bench 2.0 | State-of-the-art | Not directly compared | Anthropic |
| Linear 93-task benchmark | Baseline | +13% resolution lift | Linear |
| Factory Droids task success | Baseline | +10–15% lift | Factory AI |

Key insight: Opus 4.7 shows the strongest gains on the hardest coding tasks, the kind that previously required close supervision. It catches logical faults during planning, before execution, and handles tool failures that would stop Opus 4.6 entirely.

Multimodal and Vision

| Metric | Opus 4.6 | Opus 4.7 | Improvement |
|---|---|---|---|
| Max image resolution | 1,568px / 1.15MP | 2,576px / 3.75MP | 3.26× |
| XBOW visual acuity | 54.5% | 98.5% | +44pp / 1.81× |
| SWE-bench Multimodal | Baseline | Meaningful improvement | Anthropic |

Key insight: The resolution jump is not incremental; it's transformative for computer-use agents, life sciences workflows, and any task requiring pixel-level visual precision.

Document Reasoning and Knowledge Work

| Benchmark | Opus 4.6 | Opus 4.7 | Source |
|---|---|---|---|
| BigLaw Bench | 90.2% | 90.9% | Harvey |
| Databricks OfficeQA Pro errors | Baseline | 21% fewer errors | Databricks |
| GDPval-AA | State-of-the-art | State-of-the-art | Anthropic |
| Finance Agent | State-of-the-art | State-of-the-art | Anthropic |

Key insight: Both models are best-in-class for knowledge work. Opus 4.7 shows marginal improvements on document reasoning, but the gap is smaller than in coding or vision tasks.
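The tables above mix percentage-point gains with relative multipliers, and the two are easy to confuse. As a quick check, the short script below recomputes both for the three benchmarks where this article reports a score for each model. The `lift` helper is just illustrative arithmetic over the numbers already quoted.

```python
def lift(before: float, after: float) -> tuple:
    """Return (percentage-point gain, relative multiplier) for two scores in percent."""
    return round(after - before, 1), round(after / before, 2)


# (Opus 4.6 score, Opus 4.7 score) as reported in the tables above.
scores = {
    "CursorBench": (58.0, 70.0),
    "XBOW visual acuity": (54.5, 98.5),
    "BigLaw Bench": (90.2, 90.9),
}

for name, (before, after) in scores.items():
    points, ratio = lift(before, after)
    print(f"{name}: {points:+.1f} pp, {ratio:.2f}x")
# CursorBench: +12.0 pp, 1.21x
# XBOW visual acuity: +44.0 pp, 1.81x
# BigLaw Bench: +0.7 pp, 1.01x
```

Seen this way, the vision gain is in a different league from the coding gain, and the document-reasoning gain is close to noise.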

Long-Context Performance

| Metric | Opus 4.6 | Opus 4.7 | Notes |
|---|---|---|---|
| Context window | 1M tokens (beta) | 1M tokens (standard) | No long-context premium on 4.7 |
| Max output tokens | 128k | 128k | Same |
| MRCR v2 (8-needle, 1M) | 76% | Not reported | 4.6 had strong long-context retrieval |

Key insight: Opus 4.6 introduced the 1M token window for Opus-class models and showed strong performance on long-context retrieval. Opus 4.7 maintains this capability and removes the long-context premium (previously $10/$37.50 per million tokens above 200k).
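To see what removing the premium means in dollars, here is a rough cost sketch using only the rates quoted in this article ($5/$25 standard, $10/$37.50 premium). One assumption is labeled loudly: the code treats the premium as applying to the whole request once the input exceeds 200k tokens, which is one reading of "above 200k". Check your provider's billing documentation before relying on it. The function name `request_cost` is hypothetical.

```python
STANDARD_RATES = (5.0, 25.0)    # USD per million input / output tokens
PREMIUM_RATES = (10.0, 37.5)    # long-context rates quoted above
PREMIUM_THRESHOLD = 200_000     # input tokens


def request_cost(input_tokens: int, output_tokens: int, long_context_premium: bool) -> float:
    """Estimate the cost of one request in USD.

    ASSUMPTION: when the premium applies, the entire request is billed at the
    premium rates once input exceeds the threshold.
    """
    use_premium = long_context_premium and input_tokens > PREMIUM_THRESHOLD
    in_rate, out_rate = PREMIUM_RATES if use_premium else STANDARD_RATES
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


# A 500k-token input with a 10k-token response, with and without the premium.
print(request_cost(500_000, 10_000, long_context_premium=True))   # 5.375
print(request_cost(500_000, 10_000, long_context_premium=False))  # 2.75
```

Note that the comparison holds token counts fixed. Because the new tokenizer can produce up to about 35% more tokens for the same text, the real saving on long inputs will be somewhat smaller.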

What the Early Testers Said

On Opus 4.6

Notion: "The strongest model Anthropic has shipped. Takes complicated requests and actually follows through."

GitHub: "Delivering on the complex, multi-step coding work developers face every day."

Cursor: "The new frontier on long-running tasks from our internal benchmarks."

Harvey: "Achieved the highest BigLaw Bench score of any Claude model at 90.2%."


On Opus 4.7

Linear: "13% lift in resolution rate on our 93-task coding benchmark, including four tasks neither Opus 4.6 nor Sonnet 4.6 could solve."

Cursor: "70% resolution rate on CursorBench versus 58% for Opus 4.6."

XBOW: "Visual acuity went from 54.5% to 98.5%; our single biggest pain point with Opus 4.6 effectively disappeared."

Notion: "The first model to pass our implicit-need tests: it understands what you need, not just what you wrote."


Use Case Recommendations

When to Use Opus 4.6

Already in production and working well - if your workflows are stable on Opus 4.6 and you haven't hit its limits, there's no urgent reason to migrate

Simple coding tasks - for straightforward, single-step coding work, the performance difference is minimal

No vision-heavy workloads - if your use case doesn't involve images, the vision upgrade won't matter

Temperature/top_p dependencies - if your prompts rely on these parameters, they'll break on Opus 4.7

When to Use Opus 4.7

Hardest 20% of coding tasks - multi-file refactors, debugging sessions, autonomous feature implementations

Computer-use agents - the 3.75MP vision upgrade makes this viable for tasks where Opus 4.6 failed

Life sciences workflows - reading chemical structures, patent diagrams, technical figures

Instruction-heavy workflows - tasks where precise instruction following is critical

New projects - if you're starting fresh, Opus 4.7 is the default choice

Migration Checklist

If you're moving from Opus 4.6 to Opus 4.7:

1. Update API parameters:

Change thinking = {"type": "enabled", "budget_tokens": X} to thinking = {"type": "adaptive"}

Move effort to output_config = {"effort": "high"}

Remove temperature, top_p, top_k parameters entirely

Increase max_tokens by 1.35× as a starting point

2. Re-tune prompts:

Test prompts written for Opus 4.6, since they may produce different results on Opus 4.7

Opus 4.7 takes instructions literally; adjust for this behavioral change

Remove scaffolding added to force interim status messages (Opus 4.7 does this by default)

3. Monitor token usage:

Track actual token increase on real traffic (varies 1.0–1.35× by content type)

Adjust token budgets and compaction triggers accordingly

Use the effort parameter to control token spend if needed
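Measuring the actual increase can be as simple as counting tokens for the same inputs under both models and summarizing the ratios. The sketch below assumes you already have paired counts. How you collect them (for example, from usage data returned with each response) is outside its scope, and the sample numbers are invented for illustration.

```python
from statistics import mean


def token_ratio_summary(pairs: list) -> dict:
    """Summarize new/old token-count ratios for paired measurements.

    Each pair is (tokens_on_opus_4_6, tokens_on_opus_4_7) for the same input.
    """
    ratios = [new / old for old, new in pairs if old > 0]
    if not ratios:
        raise ValueError("need at least one pair with a positive baseline")
    return {"min": min(ratios), "mean": mean(ratios), "max": max(ratios)}


# Hypothetical measurements for three representative prompts.
samples = [(1000, 1100), (2000, 2700), (500, 500)]
summary = token_ratio_summary(samples)
print({k: round(v, 2) for k, v in summary.items()})
# {'min': 1.0, 'mean': 1.15, 'max': 1.35}
```

Sizing budgets from the observed maximum instead of the mean is the safer choice for compaction triggers, since a single oversized request is what causes truncation.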

4. Test visual workloads:

If you have vision-heavy tasks, test them early; the 3.75MP upgrade may unlock new capabilities

Consider downsampling images before sending if higher fidelity is unnecessary (to avoid token usage spikes)
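For the downsampling step, the target size can be computed before any image library touches the pixels. This is a sketch under stated assumptions: it treats the quoted limits as a cap on the longer edge plus a cap on total pixels, and it defaults to the Opus 4.6 figures (1,568 px, 1.15 MP) as a "lower fidelity is fine" target. The function name `fit_within` is hypothetical, and the actual resize is left to whatever imaging tool you already use.

```python
import math


def fit_within(width: int, height: int,
               max_edge: int = 1568,
               max_pixels: int = 1_150_000) -> tuple:
    """Return (width, height) scaled down to respect both caps, keeping aspect ratio.

    Images already within both caps are returned unchanged; nothing is upscaled.
    """
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    scale = min(
        1.0,
        max_edge / max(width, height),
        math.sqrt(max_pixels / (width * height)),
    )
    # Floor so that rounding can never push the result over either cap.
    return max(1, math.floor(width * scale)), max(1, math.floor(height * scale))


print(fit_within(800, 600))    # (800, 600), already small enough
print(fit_within(2576, 1456))  # scaled down to fit the 1.15 MP cap
```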

5. Review cybersecurity use cases:

If your workflow involves security testing, penetration testing, or vulnerability research, apply to the Cyber Verification Program

Non-verified cybersecurity requests may trigger automatic refusals on Opus 4.7

Tone and Style: What Changed

Opus 4.7 has a more direct, opinionated tone compared to Opus 4.6's warmer, validation-forward style. It uses fewer emojis, gives more regular progress updates during long agentic traces, and spawns fewer subagents by default (though this is steerable through prompting).

For teams that built UX around Opus 4.6's communication style, this is worth noting.

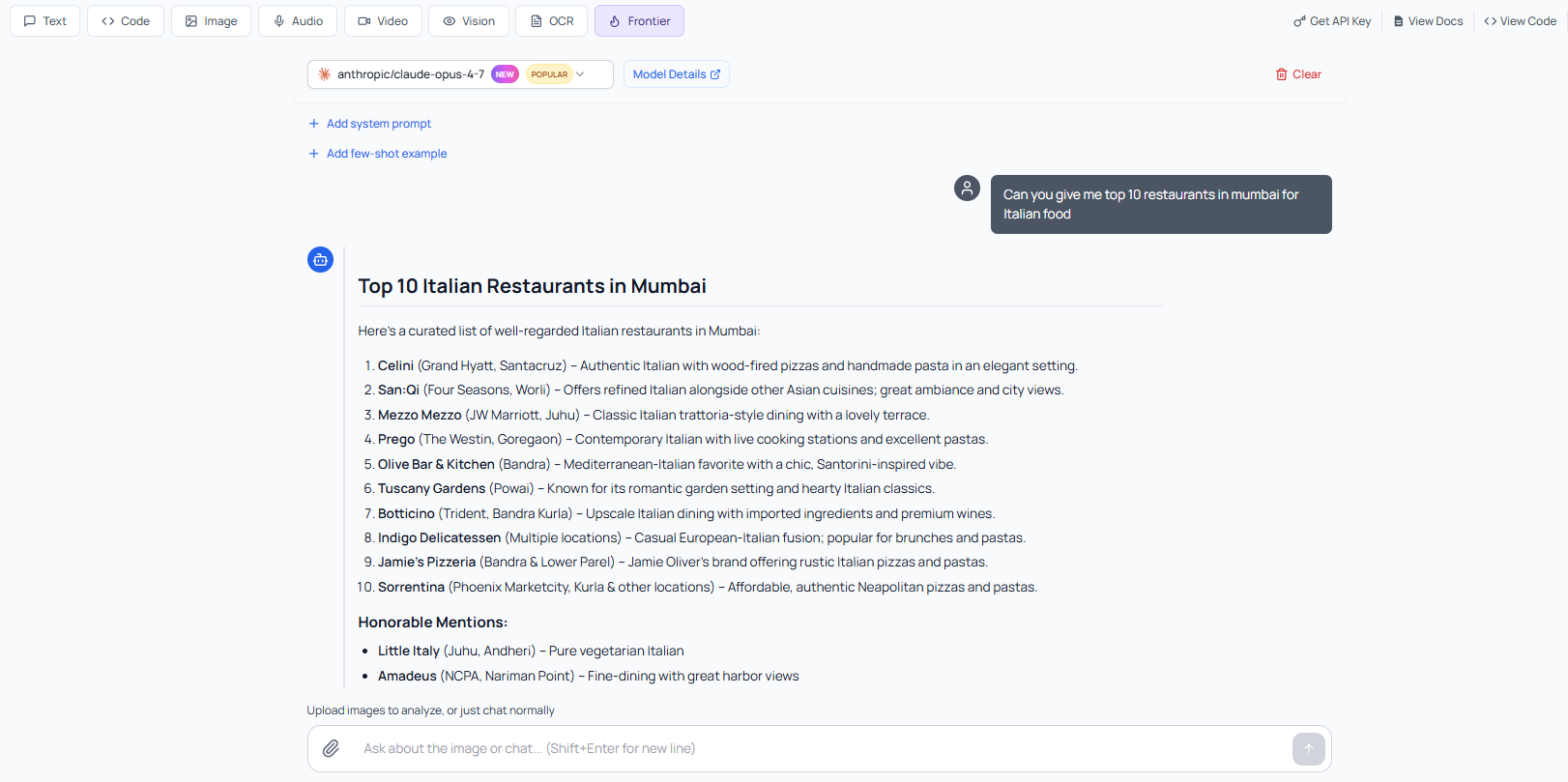

Getting Started on Qubrid AI

Both Claude Opus 4.6 and Opus 4.7 are available on Qubrid AI with no infrastructure setup required. One account, one API key, immediate access to both models for side-by-side comparison.

Step 1: Sign up at platform.qubrid.com

Step 2: Find both models in the Model Catalog and test them in the browser playground. Enter a prompt, for example: "Can you give me the top 10 restaurants in Mumbai for Italian food?"

Step 3: Generate an API key and integrate. Full documentation at docs.platform.qubrid.com

Here's a minimal Python example to compare both models on the same task:

import requests

API_URL = "https://api.platform.qubrid.com/v1/messages"


def call_opus(model, prompt):
    """Send a single user prompt to the given model and return the reply text."""
    response = requests.post(
        API_URL,
        headers={
            "Authorization": "Bearer YOUR_QUBRID_API_KEY",
            "Content-Type": "application/json",
        },
        json={
            "model": model,
            "max_tokens": 4096,
            "messages": [{"role": "user", "content": prompt}],
        },
        timeout=120,
    )
    # Surface HTTP errors directly instead of failing later with a KeyError
    response.raise_for_status()
    return response.json()["content"][0]["text"]


# Test the same prompt on both models
prompt = "Review this codebase for race conditions and explain any you find."
opus_46_result = call_opus("claude-opus-4-6", prompt)
opus_47_result = call_opus("claude-opus-4-7", prompt)

# Compare outputs
print("Opus 4.6:", opus_46_result)
print("\nOpus 4.7:", opus_47_result)

Summary Comparison Table

| Feature | Opus 4.6 | Opus 4.7 | Advantage |
|---|---|---|---|
| Release Date | Feb 5, 2026 | Apr 16, 2026 | n/a |
| Max Image Resolution | 1,568px / 1.15MP | 2,576px / 3.75MP | 4.7 (3.26×) |
| Tokenizer | Standard | New (1.0–1.35× more tokens) | 4.6 (fewer tokens) |
| Instruction Following | Loose interpretation | Literal execution | 4.7 |
| Effort Levels | low, medium, high, max | low, medium, high, xhigh, max | 4.7 |
| Adaptive Thinking | With budget tokens | Type: adaptive only | Different approaches |
| Temperature/top_p/top_k | Supported | Unsupported (400 error) | 4.6 (if needed) |
| Cybersecurity Safeguards | None | Real-time blocking | 4.7 (for safety) |
| Context Window | 1M (beta, premium pricing) | 1M (standard, no premium) | 4.7 |
| CursorBench | 58% | 70% | 4.7 |
| XBOW Visual Acuity | 54.5% | 98.5% | 4.7 |
| BigLaw Bench | 90.2% | 90.9% | Marginal |
| Finance Agent / GDPval-AA | State-of-the-art | State-of-the-art | Tie |
| Terminal-Bench 2.0 | State-of-the-art | Not compared | n/a |
| Tone | Warmer, validation-forward | Direct, opinionated | Preference |
| Best For | Stable production workflows | Hardest coding + vision tasks | Context-dependent |

Final Thoughts

Opus 4.7 is not a ground-up redesign. It's a targeted upgrade focused on three areas: advanced software engineering on the hardest tasks, high-resolution multimodal vision, and precise instruction following. For teams already running Opus 4.6 successfully, migration is optional, but for new projects or workloads hitting Opus 4.6's limits, Opus 4.7 is the clear choice.

The 3.75MP vision upgrade alone makes Opus 4.7 viable for a class of computer-use and life sciences tasks where Opus 4.6 failed. The coding improvements (catching logical faults during planning, handling tool failures, and following complex multi-step instructions) address the failure modes that made autonomous AI work unreliable at scale.

On Qubrid AI, you can test both models side-by-side on your hardest problems and decide which one fits your production requirements.

👉 Compare Opus 4.6 vs 4.7 on Qubrid AI: https://platform.qubrid.com/models