NVIDIA Nemotron 3 Nano Omni Pricing, API, Benchmarks & Architecture - on Qubrid AI

👉 Try Nemotron 3 Nano Omni on the Qubrid AI platform: https://platform.qubrid.com/playground?model=nemotron-3-nano-omni

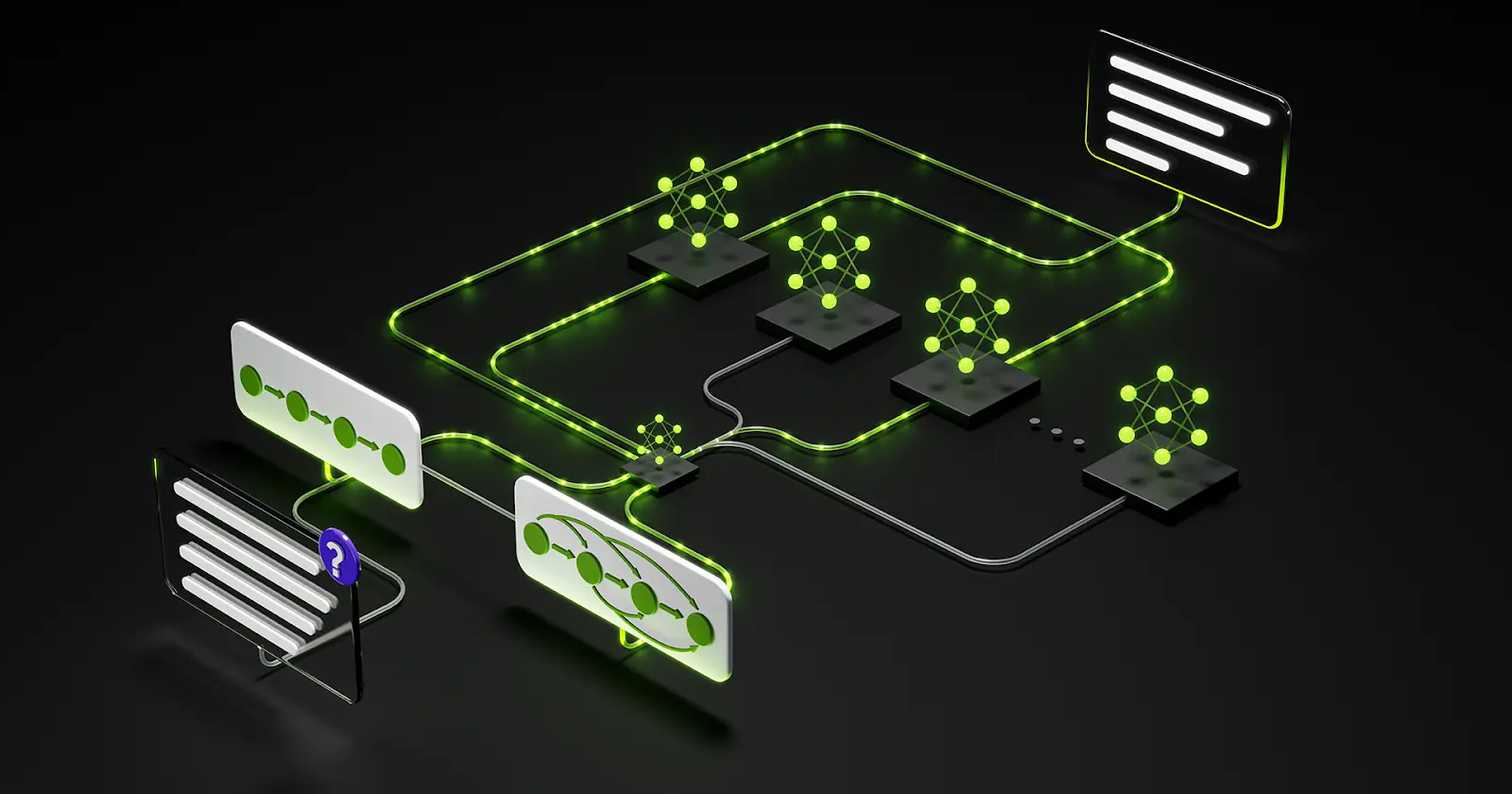

Most AI pipelines are a mess of duct tape. You have one model handling vision, another transcribing audio, and yet another stitching it all together, each hop adding latency, complexity, and cost. If you've built anything resembling an agentic system lately, you've felt this pain firsthand.

NVIDIA just changed the equation. Nemotron 3 Nano Omni is a single open model that natively understands video, audio, images, and text together, no chaining, no fragmented stacks, no orchestration gymnastics. And as an NVIDIA partner, Qubrid AI is bringing it to developers on day one, through a simple chat interface and OpenAI-compatible API.

Let's break down what makes this model special, how it works under the hood, and how you can start using it today.

What is NVIDIA Nemotron 3 Nano Omni?

Nemotron 3 Nano Omni is the latest addition to NVIDIA's Nemotron 3 model family. It is a fully open, 30B hybrid Mixture-of-Experts model designed to function as a multimodal perception sub-agent inside larger agentic systems.

The core idea is this: instead of routing inputs through separate vision, speech, and language models, Nemotron 3 Nano Omni receives all modalities, text, images, video, and audio in a single unified context window. Agents can perceive and reason across all of them in one pass, which dramatically reduces orchestration complexity and inference cost.

It leads industry benchmarks in document intelligence (MMlongbench-Doc, OCRBenchV2), video understanding (WorldSense, DailyOmni), and audio comprehension (VoiceBench). With fully open weights, datasets, and training recipes, it's built for developers who want to understand and customize what they're deploying.

👉 Try Nemotron 3 Nano Omni on the Qubrid AI platform: https://platform.qubrid.com/playground?model=nemotron-3-nano-omni

Key Specifications

Specification | Detail |

|---|---|

Total Parameters | 30B (Active: ~3B per token) |

Architecture | Hybrid Mixture-of-Experts (MoE) |

Core Layers | Mamba + Transformer |

Supported Modalities | Text, Image, Video, Audio |

Vision Encoder | C-RADIOv4-H |

Audio Encoder | NVIDIA Parakeet |

Quantization Support | FP8, NVFP4 |

GPU Support | Ampere, Hopper, Blackwell |

Inference Engines | vLLM, TensorRT-LLM, SGLang |

License | NVIDIA Nemotron Open Model License |

Weights | Fully open on Hugging Face |

The 30B-A3B designation means 30 billion total parameters with only ~3 billion activated per token, giving you the reasoning capacity of a large model at the compute cost of a much smaller one.

How the Hybrid MoE Architecture Works

The architecture behind Nemotron 3 Nano Omni is what separates it from earlier omnimodal models. It combines two complementary layer types in a single language model backbone:

Mamba layers handle long-sequence memory efficiently, maintaining context across many tokens without the quadratic cost of standard attention. Transformer layers handle precise, localized reasoning, the kind you need for factual recall and structured output. Together, this hybrid design delivers up to 4x improved memory and compute efficiency compared to pure-transformer equivalents.

Each modality gets its own dedicated encoder before being fused into this shared backbone. Images and video go through C-RADIOv4-H, a high-resolution vision foundation model that can process entire images while zooming into specific patches for OCR-level detail. For video, a 3D convolutional layer captures temporal motion between frames, and an Efficient Video Sampling (EVS) layer compresses the dense visual token stream so the language model isn't overwhelmed. Audio is handled by NVIDIA's Parakeet encoder, which was trained on data that goes well beyond transcription; it understands speech semantics and context.

Simplified Flow

┌─────────────────────────────────────────────────────┐

│ Input Sources │

│ [Text] [Image/Video] [Audio] │

└──────┬──────────────┬──────────────┬────────────────┘

│ │ │

│ C-RADIOv4-H + EVS Parakeet Encoder

│ (3D Conv) │

└──────────────┴──────────────┘

│

┌────────────▼──────────────┐

│ Gating Network (MoE) │

│ Selects active experts │

└────────────┬──────────────┘

│

┌────────────▼──────────────┐

│ Hybrid Backbone │

│ Mamba (memory) + │

│ Transformer (reasoning) │

└────────────┬──────────────┘

│

Unified Output

The gating network dynamically selects which expert sub-networks to activate for each token, which means the model specializes per-task without requiring separate models per modality.

Key Features

1. Unified Multimodal Context

Unlike previous architectures that bolt modalities together as an afterthought, Nemotron 3 Nano Omni was designed from the ground up for cross-modal reasoning. A single agent can watch a video, hear its audio, read associated documents, and produce a coherent analysis all in one context window, with no loss of cross-modal coherence.

2. Best-in-Class Document Intelligence

On MMlongbench-Doc and OCRBenchV2, Nemotron 3 Nano Omni leads all open omnimodal models. Its tiered visual compression strategy lets it maintain high-resolution OCR precision on complex multi-page documents while keeping inference costs manageable. This matters enormously for finance, legal, and healthcare workflows where document accuracy is non-negotiable.

3. Efficient Video Sampling at Scale

Processing video at scale is traditionally expensive. The model's EVS (Efficient Video Sampling) layer compresses high-density frame tokens into a concise representation that the LLM can process without sacrificing temporal understanding. Combined with 3D convolutions for motion capture, this makes Nemotron 3 Nano Omni practical for production video pipelines, not just demos.

4. RL-Trained for Agent Robustness

Post-SFT reinforcement learning across 25 environment configurations covering visual grounding, chart understanding, STEM problems, video comprehension, and ASR gives the model the robustness needed for real agentic workflows. This isn't a model trained to pass benchmarks; it was trained to work in messy, real-world environments.

5. Fully Open Stack

Model weights, training datasets (~127B multimodal pretraining tokens), post-training recipes, and RL environments are all publicly available. Developers can reproduce training, fine-tune domain-specific variants, and deploy anywhere on-premises, cloud, or edge. The NVIDIA Nemotron Open Model License gives enterprises the data control they need.

Benchmark Performance

Document Intelligence

Benchmark | What It Measures | Result |

|---|---|---|

MMlongbench-Doc | Long-document understanding | #1 among open omni models |

OCRBenchV2 | OCR accuracy across document types | #1 among open omni models |

Video and Audio Understanding

Benchmark | What It Measures | Result |

|---|---|---|

WorldSense | Temporal world understanding from video | Leading open omni score |

DailyOmni | Everyday video + audio reasoning | Leading open omni score |

VoiceBench | Speech comprehension beyond transcription | Leading open omni score |

Throughput Efficiency (MediaPerf)

This is where Nemotron 3 Nano Omni stands out most dramatically. MediaPerf evaluates models under a fixed interactivity threshold, holding per-user tokens-per-second constant, and measuring total sustainable system throughput.

Workload | vs. Comparable Open Omni Model |

|---|---|

Video reasoning | Up to ~9.2× more effective system capacity |

Multi-document reasoning | Up to ~7.4× more effective system capacity |

On Blackwell GPUs with NVFP4 quantization, the model achieves the highest throughput among open omnimodal models for enterprise-grade document, video, and audio workloads. This isn't a raw benchmark artifact; it reflects what happens when you consolidate a three-model pipeline into one efficiently gated expert architecture.

Getting Started on Qubrid AI

As an NVIDIA partner, Qubrid AI brings Nemotron 3 Nano Omni to developers without any of the infrastructure overhead. No GPU provisioning, no framework configuration, just start experimenting.

Step 1: Create a Qubrid AI Account

Sign up at qubrid.com. Start with a $5 top-up and get $1 in free tokens to explore the platform and run real workloads from day one.

Step 2: Try in the chat interface

Head to the Nemotron 3 Nano Omni on the chat interface and start interacting with the model directly in your browser. Upload images or documents, adjust parameters like temperature and max tokens, and see multimodal reasoning in action before writing a single line of code.

Step 3: Integrate via API (Optional)

When you're ready to build, Qubrid uses an OpenAI-compatible API. Drop it into any existing application with minimal changes:

from openai import OpenAI

# Initialize the OpenAI client with Qubrid base URL

client = OpenAI(

base_url="https://platform.qubrid.com/v1",

api_key="QUBRID_API_KEY",

)

response = client.chat.completions.create(

model="nvidia/Nemotron-3-Nano-Omni",

messages=[

{

"role": "user",

"content": "Summarize this support ticket into bullet-point next steps for the agent."

}

],

max_tokens=500,

temperature=0.7

)

print(response.choices[0].message.content)The same API pattern works whether you're sending text, images, or building multi-turn agents.

Practical Use Cases

Enterprise Document Processing - Combine OCR-level accuracy with semantic reasoning to extract, summarize, and flag information from contracts, reports, financial filings, and regulatory documents. Nemotron 3 Nano Omni doesn't just read the text; it understands the structure and context.

Video Content Intelligence - Build pipelines that watch video, transcribe audio with contextual understanding, identify on-screen text, and generate structured metadata all in a single model call. Ideal for media companies, ad-tech platforms, and content moderation systems.

Multimodal Agentic Systems - Use Nemotron 3 Nano Omni as the perception sub-agent in a larger system alongside planning models like Nemotron 3 Super or Ultra. The model handles what the agent sees and hears; the planning model decides what to do next.

Clinical and Research Analysis - Process medical imaging, patient records, and audio consultation notes in unified workflows. The model's strong document understanding and cross-modal context make it well-suited for research summarization and clinical data extraction.

Computer Use and UI Agents - Screenshot-based agents can use the model's high-resolution visual processing and temporal reasoning to understand application state, navigate interfaces, and complete multi-step workflows.

Why Developers Use Qubrid AI

Running a 30B omnimodal model locally is not a realistic option for most teams. Qubrid makes the infrastructure invisible so you can focus on the application layer.

The platform runs on high-performance GPUs with low-latency inference already tuned for models like Nemotron 3 Nano Omni. A unified API means you can swap between models Nemotron, Kimi K2.5, Qwen, and others without rewriting integration code. The playground-to-production workflow is direct: test prompts in the browser, copy the configuration to your API call, and deploy.

As an NVIDIA partner, Qubrid is committed to bringing you NVIDIA’s latest models, like Nemotron 3 Nano Omni, as soon as they launch, making them available on Qubrid as quickly as possible.

👉 Try Nemotron 3 Nano Omni on the Qubrid AI platform: https://platform.qubrid.com/playground?model=nemotron-3-nano-omni

Our Thoughts

Nemotron 3 Nano Omni is a meaningful architectural step, not just a benchmark bump. The shift from chained modality models to a single unified perception loop solves a real engineering problem: cross-modal context degrades every time you hand off between models. By keeping everything in one shared context, the model can reason across modalities the same way a human does, seeing, hearing, and reading simultaneously.

The throughput efficiency numbers (9.2× in video, 7.4× in multi-document reasoning at matched interactivity thresholds) are significant for anyone running at scale. That's not a cherry-picked lab result; it's what happens when you replace three inference hops with one.

The fully open stack includes weights, data, training recipes, and RL environments, making this serious infrastructure for serious developers. If you're building production agentic systems that touch documents, video, or audio, this model is worth your attention.

👉 Explore all available models at platform.qubrid.com/models